magic addition machines

Introduction to Logic

Deep Springs College

February 2026

“Logic!,” I said. “We thought it was about math and philosophy and searching for truth—but my Silicon Valley bait-and-switch is that it’s actually about building computers!”

This is my memorialization of a diversion into digital electronics we took during an Introduction to Logic class I taught at Deep Springs College in Spring 2026. We “hacked” our knowledge of logical operators, reinterpreting “true” and “false” as “0” and “1”, and gluing together zeroes and ones to represent big numbers as binary sequences. Over the course of a couple weeks we built what electrical engineers call a half-adder, then a full adder, then a multi-bit adder, and finally, as the sole problem on our midterm exam, a three-bit multiplier. In other words, using only the logical AND and XOR functions, we figured out how to mechanically multiply together any two numbers from \(0\) to \(7\).

We did lots of other stuff simultaneously: we thought about a cornucopia of exploratory/inquiry-based propositional logic problems I wrote, and we worked through W.V.O. Quine’s 1950s textbook Methods of Logic. The earlier parts of the class overlapped with a seminar on Wittgenstein’s Tractatus that several students were also in; the electronics stuff overlapped with an Analog Electronics class that several other students were in. Cowboy synergy!

I owe inspirational credit to former colleague Wes Chao, who, when I was teaching baby logic to ninth-graders during covid, suggested we play Nandgame, an interactive online game based on the classic text/course Nand2Tetris, which walks the students through building a working game of Tetris (and computer) starting from nothing but basic logic gates. Brian Hill, Deep Springs’ long-term physics professor, also provided helpful ideas (and stories of trying to work through, for fun, the schematics of the HP-35, the first commercially successful scientific calculator).

Below are a selection of the electronics-relevant questions from our (twice-weekly) problem sets, plus my detailed notes on the key problems.

- finger-counting in binary

- addition (overwhelmingly)

- addition (back to basics)

- addition (but in binary)

- addition (using logic!)

- so how to make this magic addition machine?

- adding two big numbers together

- adding four small numbers together

- keevan’s four-bit adder

- why all the XOR’ing?

- ~~~magic multipliers~~~

finger-counting in binary

I casually mentioned in class that the logical functions we were learning about also are the basis of all computers. To the Great Booksy humanities nerds at Deep Springs this was a revelation! In preparation for learning baby electronics, I had the kids review base-2, by teaching them how to count on their fingers in binary. From PS #6:

Count on one hand to \(32\).

And later on PS#6:

Count on one hand to 32, again.

And from PS # 7:

Teach someone ELSE to count on their hands in binary! Include a video for proof. It can’t be the same person—in other words, since there are six of you, by Monday the ninth, there should be TWELVE DS’ers who can count on their fingers in binary! Extra points if the video is at the Sump.

Here’s an in media res excerpt from the video proof of Sasha having taught Kel how to count in their hands in binary (as Lucas watches):

Once we had started learning about quantifiers and there were applicants/prospective students on campus, I put this on PS#9:

Standing extra credit if you can teach binary finger counting to a staffulty member (only half points if it’s Rachel or Brian). Also: \[\left( \exists x \in \substack{\text{the set of applicants visiting }\\\text{Deep Springs this weekend}}, \text{ such that } \substack{x\text{ can't count in}\\\text{binary on their hands}\\\text{by the end of the weekend}} \right) \implies \left( \forall y, \,\,y \in\text{logic class } \implies y \text{ will fail } \right) \]

addition (overwhelmingly)

From problem set #7:(This problem is big and exploratory; I want you to attack it as much as you can; I’m not sure how far you’ll get!)

CAN YOU BUILD A ROBOT?!?!?!? Or at least, a logical machine that adds together some small numbers? (Baby steps!)

Suppose we want to add together two numbers, both of which are between \(0\) and \(3\), inclusive. We can represent each number as a binary number with two digits, so we can model that with two truth variables each. (Note useful vocab word: bit, short for binary digit, zero or one, finger up or down, true or false.). More specifically:

- let’s say that \(P\) and \(Q\) represent the digits of the first number, such that \(Q\) represents the \(2^0\) digit and \(P\) the \(2^1\) digit. So then we can represent the numbers \(0\) to \(3\) as:

- \(0 = 0\cdot 2^1 + 0\cdot 2^0 = 0\cdot 2 + 0 \cdot 1\): \(P\) false, \(Q\) false.

- \(1 = 1\cdot 2^1 + 1\cdot 2^0 = 0\cdot 2 + 1 \cdot 1\): \(P\) false, \(Q\) true.

- \(2 = 1\cdot 2^1 + 0\cdot 2^0 =1\cdot 2 + 0 \cdot 1\): \(P\) true, \(Q\) false.

- \(3 = 1\cdot 2^1 + 1\cdot 2^0 = 1\cdot 2 + 1 \cdot 1\): \(P\) true, \(Q\) true.

- Likewise, let’s say that \(R\) and \(S\) represent the digits of the second number in the same way, with \(R\) being the \(2^1\) digit and \(S\) being the \(2^0\) digit.

If we add these two numbers together, the result can be between \(0\) and \(6\), inclusive, meaning we need three binary digits for the output. So we need a set of three logical functions, each representing one of the three digits.

So, for example, if we think about how we’d add 2 + 1 in this symbolism, we’d have

- let the first number be \(2\), so we have \(P=1\), \(Q=0\), or just \(P=T\), \(Q=F\)

- the second number is \(1\), so we have \(R=0\), \(S=1\), or just \(R=F\), \(S=T\)

- the output is \(3\), which is \(0\cdot 2^2 + 1\cdot 2^1 + 1 \cdot 2^0\), so our three output values are \(0\), \(1\), and \(1\), or just \(F\), \(T\), and \(T\)

So, for example, the line in the truth table corresponding to \(2+1=3\) might look like:

first number second number their sum \(P\) \(Q\) \(R\) \(S\) third output bit

(i.e., \(2^2\) coefficient)second output bit

(i.e., \(2^1\) coefficient)first output bit

(i.e., \(2^0\) coefficient)“\(2 + 1 = 3\)”: 1 0 0 1 0 1 1 Or with \(T\)’s and \(F\)’s:

first number second number their sum \(P\) \(Q\) \(R\) \(S\) third output bit

(i.e., \(2^2\) coefficient)second output bit

(i.e., \(2^1\) coefficient)first output bit

(i.e., \(2^0\) coefficient)“\(2 + 1 = 3\)”: T F F T F T T So somehow, we need to come up with three functions for each of these three output bits, such that the three output functions correspond to adding together the input numbers! We want to write each of these three output functions in terms of standard logical connections—if, not, or, and, etc.

I’ve never built this up before, so I don’t have a ton of pointed suggestions, other than to DIVE IN! Perhaps one first place to start would be to write out all \(16\) rows of the truth table, so you can at least know the output each of the three output functions gives. But of course the point is to then find a logical function of \(P\), \(Q\), \(R\), and \(S\) combines those four variables in ways that correspond to adding these two binary numbers together. Some questions/ideas/provocations: can you tell if the output number is even? can you make an even simpler thing that rather than adding two numbers \(0\) to \(3\), just adds two numbers \(0\) to \(1\) (i.e., it just needs one bit for each number)?

I wrote this problem before I had a detailed understanding of how adders work; my main goal was to give the kids something huge and overwhelming for them to get lost in. Success! They tried very hard to make sense of this setup; they didn’t come up with working functions, but were curious and excited and intrigued (which was my intent). Huge, unstructured problems tend to be unsuccessful if you want to achieve (or want the kids to achieve) a specific goal—but they can be a good way of provoking curiosity and creativity.

addition (back to basics)

From problem set #7:Add, by hand, using the elementary school algorithm where you carry the digits and whatnot—I haven’t done this since elementary school either; I swear I have a good reason for asking you to do this!—the numbers:

\[\begin{array}{r} 345{,}648 \\ + \quad 92{,}905 \\ \hline\\ \end{array}\]

In high school, you added together two- and three-digit numbers. Now we’re in college. Now it’s time to add together SIX-DIGIT NUMBERS!

In particular, suppose we want to add these numbers: \[\begin{array}{r} 345{,}648 \\ + \quad 92{,}905 \\ \hline\\ \end{array}\] How do we do it??? Using our beloved elementary-school algorithm, we start at the right side (the smallest place value digit), and work to the left (to the largest). Starting out, \(8+5\) is \(13\), so we’ll put down a \(3\), and then carry/overflow the \(1\) into the next column: \[\begin{array}{r} {\color{gray} 1}\phantom{0} \\ 345{,}648 \\ + \quad 92{,}905 \\ \hline 3 \end{array}\] Next, we add together all three of these numbers in the tens column. We add not just \(4\) and \(0\), but also the carried/overflowed \(1\) from the top. So \(1+4+0\) is \(5\), so we’ll put that down. There’s nothing to carry; equivalently, we can imagine “carrying” a zero: \[\begin{array}{r} {\color{gray} 0 }{\color{gray} 1}\phantom{0} \\ 345{,}648 \\ + \quad 92{,}905 \\ \hline 53 \end{array}\] Same computation again: we add all three of the numbers in the hundreds column; the two numbers from the hundreds places of our original addends (fun word!), plus the carry digit (which is just zero). So \(0+6+9\) is \(15\), so we’ll put the \(5\) down and carry the \(1\): \[\begin{array}{r} {\color{gray} 1 }\phantom{,}{\color{gray} 0 }{\color{gray} 1}\phantom{0} \\ 345{,}648 \\ + \quad 92{,}905 \\ \hline 553 \end{array}\] Then \(1+5+2\) is \(8\), no carry, so we’ll just put the \(8\) down (and “carry” a zero): \[\begin{array}{r} {\color{gray} 0 }{\color{gray} 1 }\phantom{,}{\color{gray} 0 }{\color{gray} 1}\phantom{0} \\ 345{,}648 \\ + \quad 92{,}905 \\ \hline 8,553 \end{array}\] Same procedure. \(0+4+9\) is \(13\), so we’ll put down the \(3\) and carry the \(1\): \[\begin{array}{r} {\color{gray} 1 }{\color{gray} 0 }{\color{gray} 1 }\phantom{,}{\color{gray} 0 }{\color{gray} 1}\phantom{0} \\ 345{,}648 \\ + \quad 92{,}905 \\ \hline 38,553 \end{array}\] Finally, \(1+3\), plus the invisible zero in front of \(92,905\), is \(4\): \[\begin{array}{r} {\color{gray} 1 }{\color{gray} 0 }{\color{gray} 1 }\phantom{,}{\color{gray} 0 }{\color{gray} 1}\phantom{0} \\ 345{,}648 \\ + \quad {\color{gray} 0}92{,}905 \\ \hline 438,553 \end{array}\] Yay! So we have our addition: \[345,648 + 92,905 = 438,553\] Note that we did the same procedure over and over. At the core, we were just doing a procedure/algorithm/machine that:

- takes in three single-digit numbers

- spits out two single-digit numbers

Or, in more detail, a procedure that:

- takes in three single-digit numbers

- two digits from the two original numbers we’re adding

- one carry/overflow digit from the previous step

- spits out two single-digit numbers

- one “ones” digit

- and one “tens” digit that we carry/overflow into the next step

We can think of adding big numbers like this together as just

stringing together a bunch of identically-operating magic addition

machines! Visually, here’s how that might look. First we input \(8\) and \(5\). The magic addition machine gives us

\(13\) as the sum, so we put down a

\(3\), and carry a \(1\): Then, in the next step, we have three numbers to

add together: the carried \(1\), and

then the \(4\) and the \(0\). The magic addition machine tells us

their sum is \(5\), so we put that

down, and “carry” a zero:

Then we add the next three

numbers together. Zero plus six plus nine is \(15\), so we put down a \(5\) and carry a \(1\):

Et cetera:

Et cetera:

And finally:

So if we have this magic addition machine that

can take in three single-digit numbers, and spit out two single-digit

numbers (or, equivalently, one two-digit number), then we can copy and

paste that machine over and over again (… like I did in Adobe

Illustrator to make that image) to add together numbers as big as we

want! How exactly that magic addition machine works is a different

story. Perhaps it memorizes addition tables, or something like that?

Regardless, if we can add together small numbers, we can use

that to add together arbitrarily big numbers.

addition, revisited, but in binary

If we’re trying to add numbers in binary, the deal is exactly the same! It’s the same algorithm—the only thing that’s different is how the digits work.

For example, let’s add \(345\) and

\(82\). I guess we have to convert them

to binary first. We have: \[\begin{align*}

345 & = 256 + 64 + 16 + 8 + 1 \\

&= 2^8 + 2^6 + 2^4 + 2^3 + 2^0 \\

&= 1\!\cdot\! 2^8 + 0\!\cdot\! 2^7 + 1\!\cdot\! 2^6 + 0\!\cdot\! 2^5

+ 1 \!\cdot\! 2^4 + 0\!\cdot\! 2^3 + 0\!\cdot\! 2^2 + 0\!\cdot\! 2^1 +

1\!\cdot\! 2^0 \\

&=

\mathtt{1}\mathtt{0}\mathtt{1}\mathtt{0}\mathtt{1}\mathtt{1}\mathtt{0}\mathtt{0}\mathtt{1}

\end{align*}\] And: \[\begin{align*}

43 & = 32 + 8 + 2 + 1 \\

&= 2^5 + 2^3 + 2^1 + 2^0 \\

&= 1\!\cdot\! 2^5 + 0 \!\cdot\! 2^4 + 1\!\cdot\! 2^3 + 0\!\cdot\!

2^2 + 1\!\cdot\! 2^1 + 1\!\cdot\! 2^0 \\

&= \mathtt{1}\mathtt{0}\mathtt{1}\mathtt{0}\mathtt{1}\mathtt{1}

\end{align*}\] So to add then, we’ll have: \[\begin{array}{r}

\mathtt{1}\mathtt{0}\mathtt{1}\mathtt{0}\mathtt{1}\mathtt{1}\mathtt{0}\mathtt{0}\mathtt{1}\\

+ \mathtt{1}\mathtt{0}\mathtt{1}\mathtt{0}\mathtt{1}\mathtt{1} \\

\hline \\

\end{array}\] OK, let’s do this! From the right, the first

column, we have \(\mathtt{1}+\mathtt{1}=\mathtt{10}\), so

we’ll put a \(\mathtt{0}\) down and

carry the \(\mathtt{1}\): \[\begin{array}{r}

{\color{gray}\mathtt{1}}\phantom{\mathtt{1}} \\

\mathtt{1}\mathtt{0}\mathtt{1}\mathtt{0}\mathtt{1}\mathtt{1}\mathtt{0}\mathtt{0}\mathtt{1}\\

+ \mathtt{1}\mathtt{0}\mathtt{1}\mathtt{0}\mathtt{1}\mathtt{1} \\

\hline

\mathtt{0}

\end{array}\] Then next, we have \(\mathtt{1}+\mathtt{0}+\mathtt{1}\), which

is \(\mathtt{10}\), so we’ll put a

\(\mathtt{0}\) down and carry the \(\mathtt{1}\): \[\begin{array}{r}

{\color{gray}\mathtt{1}} {\color{gray}\mathtt{1}} \phantom{\mathtt{1}}\\

\mathtt{1}\mathtt{0}\mathtt{1}\mathtt{0}\mathtt{1}\mathtt{1}\mathtt{0}\mathtt{0}\mathtt{1}\\

+ \mathtt{1}\mathtt{0}\mathtt{1}\mathtt{0}\mathtt{1}\mathtt{1} \\

\hline

\mathtt{0}\mathtt{0}

\end{array}\] Then \(\mathtt{1}+\mathtt{0}+\mathtt{0}\) is \(\mathtt{1}\), so we’ll put that down, and

``carry’’ a zero: \[\begin{array}{r}

{\color{gray}\mathtt{0}} {\color{gray}\mathtt{1}}

{\color{gray}\mathtt{1}} \phantom{\mathtt{1}}\\

\mathtt{1}\mathtt{0}\mathtt{1}\mathtt{0}\mathtt{1}\mathtt{1}\mathtt{0}\mathtt{0}\mathtt{1}\\

+ \mathtt{1}\mathtt{0}\mathtt{1}\mathtt{0}\mathtt{1}\mathtt{1} \\

\hline

\mathtt{1}\mathtt{0}\mathtt{0}

\end{array}\] Then we have \(\mathtt{0}+\mathtt{1}+\mathtt{1}\), which

is \(\mathtt{10}\), so we’ll put down a

\(\mathtt{0}\) and carry that \(\mathtt{1}\): \[\begin{array}{r}

{\color{gray}\mathtt{1}} {\color{gray}\mathtt{0}}

{\color{gray}\mathtt{1}} {\color{gray}\mathtt{1}} \phantom{\mathtt{1}}\\

\mathtt{1}\mathtt{0}\mathtt{1}\mathtt{0}\mathtt{1}\mathtt{1}\mathtt{0}\mathtt{0}\mathtt{1}\\

+ \mathtt{1}\mathtt{0}\mathtt{1}\mathtt{0}\mathtt{1}\mathtt{1} \\

\hline

\mathtt{0}\mathtt{1}\mathtt{0}\mathtt{0}

\end{array}\] Etc., I’m getting bored typing all this , out by

hand, so I’m just going to zoom forwards to the result: \[\begin{array}{r}

{\color{gray}\mathtt{1}}{\color{gray}\mathtt{1}}{\color{gray}\mathtt{1}}{\color{gray}\mathtt{1}}

{\color{gray}\mathtt{0}} {\color{gray}\mathtt{1}}

{\color{gray}\mathtt{1}} \phantom{\mathtt{1}}\\

\mathtt{1}\mathtt{0}\mathtt{1}\mathtt{0}\mathtt{1}\mathtt{1}\mathtt{0}\mathtt{0}\mathtt{1}\\

+ \mathtt{1}\mathtt{0}\mathtt{1}\mathtt{0}\mathtt{1}\mathtt{1} \\

\hline

\mathtt{1}\mathtt{1}\mathtt{0}\mathtt{0}\mathtt{0}\mathtt{0}\mathtt{1}\mathtt{0}\mathtt{0}

\end{array}\] Translating this binary number back into decimal,

we get: \[\begin{align*}

\mathtt{1}\mathtt{1}\mathtt{0}\mathtt{0}\mathtt{0}\mathtt{0}\mathtt{1}\mathtt{0}\mathtt{0}

&= 1\!\cdot\! 2^8 + 1\!\cdot\! 2^7 + 0\!\cdot\! 2^6 + 0\!\cdot\! 2^5

+ 0 \!\cdot\! 2^4 + 0\!\cdot\! 2^3 + 1\!\cdot\! 2^2 + 0\!\cdot\! 2^1 +

0\!\cdot\! 2^0 \\

&= 2^8 + 2^7 + 4 \\

&= 256 + 128 + 4 \\

&= 388

\end{align*}\] Yay! Here’s that process, visualized:

Procedurally, this works the same way as for adding numbers in decimal. The magic addition machine at the center is inputting and outputting numbers in binary, not decimal, but once we have that magic addition machine (maybe a binary magic addition machine, maybe a decimal magic addition machine), we can add together any two numbers.

So what is this magic black box at the center of this recursive algorithm?!?!? It’s some magic function (or really, two magic functions, one for each output), that:

- takes as input:

- one addend (\(\mathtt{1}\) or \(\mathtt{0}\))

- a second addend (also \(\mathtt{1}\) or \(\mathtt{0}\))

- a third carry/overflow digit (still just \(\mathtt{1}\) or \(\mathtt{0}\))

- beget as output:

- the first digit (to put down for the result for that place)

- the second/carry/overflow digit (to feed into the next step)

… which brings us to the quiz problem.

adding numbers using logic!

Here’s the last problem on our propositional logic quiz:6. Addition! It’s fun! Suppose you want to use logic to add numbers. Can you do it???

In particular, let’s be unambitious, and say you just want to add two numbers, each of which is either \(0\) or \(1\), and so can be represented each with one truth variable. Zero plus zero is zero, zero plus one is one; same with one plus zero; one plus one is two. So can you come up with a way of combining these two numbers-disguised-as-truth-variables that corresponds to addition?? Note that two is not either zero or one, so if you want to use logical functions to add together these two one-bit numbers—“one bit” meaning that they can be represented with a single logical variable—you’ll need two bits for the output—i.e., you need two separate logical functions for the output, one for the \(2^0\) digit of the output, and one that begets the \(2^1\) digit of the output.

This is what we talked about briefly in class on Monday 2/2, and what (in a trickier version) was on the problem set for Monday 2/9!

The key is that we can think of truth variables as representing numbers. Usually we think of them as representing—well—truth-values—but we can equivalently think of them as representing either the number zero, or the number one. That means we can secretly encode numbers in truth variables! We just have to represent them in binary. Say we want to add together two numbers, each of which is either zero or one: \[\begin{align*} 1+1&=2 \\ 1+0 &= 1 \\ 0+1 &= 1 \\ 0 + 0 &= 0 \end{align*}\] In binary, this looks like: \[\begin{align*} \mathtt{1}+\mathtt{1} &=\mathtt{1}\mathtt{0} \\ \mathtt{1}+\mathtt{0} &=\mathtt{0}\mathtt{1} \\ \mathtt{0}+\mathtt{1} &=\mathtt{0}\mathtt{1} \\ \mathtt{0}+\mathtt{0} &=\mathtt{0}\mathtt{0} \end{align*}\] So we can think of this as being already a truth table: we have these two inputs (the two things added together) on the left side of the equation, and then two outputs (the two binary digits/two truth values) on the right side. As a truth table, this might be:| \(P\) | \(Q\) |

second output digit (i.e., \(2^1\) coefficient) |

first output digit (i.e., \(2^0\) coefficient) |

|

|---|---|---|---|---|

| “\(1+1=2\),” i.e. \(\mathtt{1}+\mathtt{1}=\mathtt{10}\) | ||||

| “\(1+0=1\),” i.e. \(\mathtt{1}+\mathtt{0}=\mathtt{01}\) | ||||

| “\(0+1=1\),” i.e. \(\mathtt{0}+\mathtt{1}=\mathtt{01}\) | ||||

| “\(0+0=0\),” i.e. \(\mathtt{0}+\mathtt{0}=\mathtt{00}\) |

Note that I’m using “first” and “second” in the “reading from right to left” sense. The second output digit also often gets called the carry, like how when you’re doing addition by hand and things overflow you carry it over to the next column.

We can fill this in, since we know how to add \(0\) and \(1\), so this is:| \(P\) | \(Q\) |

second output digit (i.e., \(2^1\) coefficient) |

first output digit (i.e., \(2^0\) coefficient) |

|

|---|---|---|---|---|

| “\(1+1=2\),” i.e. \(\mathtt{1}+\mathtt{1}=\mathtt{10}\) | 1 | 1 | 1 | 0 |

| “\(1+0=1\),” i.e. \(\mathtt{1}+\mathtt{0}=\mathtt{01}\) | 1 | 0 | 0 | 1 |

| “\(0+1=1\),” i.e. \(\mathtt{0}+\mathtt{1}=\mathtt{01}\) | 0 | 1 | 0 | 1 |

| “\(0+0=0\),” i.e. \(\mathtt{0}+\mathtt{0}=\mathtt{00}\) | 0 | 0 | 0 | 0 |

But this is just describing our existing knowledge of how to add numbers together in binary. The question is, what ARE those two output functions???? \[\begin{align*} \substack{\text{second output digit}\\\text{i.e., $2^1$ coefficient}} \quad&= \quad ???\\ \\ \substack{\text{first output digit}\\\text{i.e., $2^0$ coefficient}} \quad&= \quad ??? \end{align*}\] Meaning, we know what the ANSWERS are, but what are the logical functions that get us there? How do we take \(P\) and \(Q\), combine them with \(\land\), \(\sim\), \(\lor\), \(\implies\), or whatever, and get those answers?

Let’s look closely. I’ll copy down just \(P\), \(Q\), and the result we want for the \(2^0\) digit:

| \(P\) | \(Q\) |

first output digit (i.e., \(2^0\) coefficient) |

|---|---|---|

| 1 | 1 | 0 |

| 1 | 0 | 1 |

| 0 | 1 | 1 |

| 0 | 0 | 0 |

| \(P\) | \(Q\) |

second output digit (i.e., \(2^1\) coefficient) (aka “carry digit”) |

|---|---|---|

| 1 | 1 | 1 |

| 1 | 0 | 0 |

| 0 | 1 | 0 |

| 0 | 0 | 0 |

Look at that! It’s \(AND\)! \(P\land Q\)!

Let’s summarize:| \(P\) | \(Q\) |

second output digit (i.e., \(2^1\) coefficient) (aka “carry digit”) \(P \land Q\) |

first output digit (i.e., \(2^0\) coefficient) \(P \oplus Q\) |

|

|---|---|---|---|---|

| “\(1+1=2\),” i.e. \(\mathtt{1}+\mathtt{1}=\mathtt{10}\) | 1 | 1 | 1 | 0 |

| “\(1+0=1\),” i.e. \(\mathtt{1}+\mathtt{0}=\mathtt{01}\) | 1 | 0 | 0 | 1 |

| “\(0+1=1\),” i.e. \(\mathtt{0}+\mathtt{1}=\mathtt{01}\) | 0 | 1 | 0 | 1 |

| “\(0+0=0\),” i.e. \(\mathtt{0}+\mathtt{0}=\mathtt{00}\) | 0 | 0 | 0 | 0 |

So we’ve figured out how to build a machine that takes in two numbers from \(0\) to \(1\) and adds them together, using only our logical connectives/truth functions! We just need one logical variable for the output, and then these two different functions, \(\land\) and \(\lor\), give us the two output truth values!

It’s not exactly what we need for our add-any-number-however-big algorithm. For that, we need some magic addition machine that takes three inputs. This one only takes two. I guess it’s just a partial magic addition machine:

(In class I used the name half-adder for it, which is indeed the name most people use. But I think that name gives away too much about how to make a full adder, i.e. the full magic addition machine that takes three inputs. So I’m going to call it a partial magic addition machine instead.)

If we write it as a circuit diagram, exploding the innards, we have something like:

so how to make this magic addition machine?

But how do we make the full magic addition machine?? Or, as I put it on PS #8:Build a full adder! Meaning, can you build a function (or rather, a set of two functions, as we discussed) that takes in three binary digits as input, adds them together, and returns the result (which requires two outputs, one for each digit/place value)?

(Of course you can look up how to do it, just like how you can look up everything/anything for this class, but… don’t! That spoils the fun!

Here’s what we want:

| \(P\) | \(Q\) | \(R\) |

second output digit (i.e., \(2^1\) coefficient) (aka “carry digit”) |

first output digit (i.e., \(2^0\) coefficient) |

|

|---|---|---|---|---|---|

| “\(1+1+1=3\),” i.e. \(\mathtt{1}+\mathtt{1}+\mathtt{1}=\mathtt{11}\) | 1 | 1 | 1 | 1 | 1 |

| “\(1+1+0=2\),” i.e. \(\mathtt{1}+\mathtt{1}+\mathtt{0}=\mathtt{10}\) | 1 | 1 | 0 | 1 | 0 |

| “\(1+0+1=2\),” i.e. \(\mathtt{1}+\mathtt{0}+\mathtt{1}=\mathtt{10}\) | 1 | 0 | 1 | 1 | 0 |

| “\(1+0+0=1\),” i.e. \(\mathtt{1}+\mathtt{0}+\mathtt{0}=\mathtt{01}\) | 1 | 0 | 0 | 0 | 1 |

| “\(0+1+1=2\),” i.e. \(\mathtt{0}+\mathtt{1}+\mathtt{1}=\mathtt{10}\) | 0 | 1 | 1 | 1 | 0 |

| “\(0+1+0=1\),” i.e. \(\mathtt{0}+\mathtt{1}+\mathtt{0}=\mathtt{01}\) | 0 | 1 | 0 | 0 | 1 |

| “\(0+0+1=1\),” i.e. \(\mathtt{0}+\mathtt{0}+\mathtt{1}=\mathtt{01}\) | 0 | 0 | 1 | 0 | 1 |

| “\(0+0+0=0\),” i.e. \(\mathtt{0}+\mathtt{0}+\mathtt{0}=\mathtt{00}\) | 0 | 0 | 0 | 0 | 0 |

But how do we actually make this? Staring at the truth table trying to figure out what logical functions will work doesn’t seem like a great plan. It seems… hard.

What if we try using the smaller, partial magic addition

machines we already have? Those ones take in only two one-digit numbers.

But that’s a start. What if we use two of the partial magic addition

machines? We could add \(p\) and \(q\) using one of the partial magic addition

machines, then take the result, and use a second partial magic addition

machine to add it to \(r\). Addition,

after all, is fundamentally binary. There’s no such thing as

adding three numbers together; there’s only adding two numbers together:

\[\underbrace{p+q+r}_{\mathclap{\text{this

doesn't actually exist!}}} \quad=\quad

\underbrace{(p+q)+r}_{\mathclap{\text{this does}}}\] Or in syntax

tree form: Put differently, perhaps we should think of this

as two smaller additions: \[\text{the first

addition: }\quad p + q\] \[\text{the

second addition: }\quad \left(\substack{\text{whatever $p$}\\\text{plus

$q$ was}}\right) + r\] The tricky thing is how to deal with the

carry. The

half-adder partial magic addition machine tells us

how to add two one-bit/one-digit numbers together, but the result is two

bits/two digits. The naiive way to string together two half-adders is:

But this can’t be what we want. It gives us THREE

outputs. It’s not clear how we deal with the fact that we have a carry

from both the half-adders… how do we deal with that when we add \(r\)?

Let’s imagine that we start by adding \(p\) and \(q\), using our partial magic addition machine, and that gives us the number \(d_1d_0\) as output. Here I mean that \(d_1\) and \(d_0\) are the digits of the output number; I don’t mean that we’re multiplying together \(d_1\) and \(d_0\). So \(d_0\) is the \(2^0\) digit and \(d_1\) is the carry/\(2^1\) digit. (If you’re reading this closely, note that I changed the indexing—in class I called this \(d_2d_1\). But this way the indexing matches the power of \(2\)!)

Note that \(d_1d_0\) is at most \(2\), i.e. \(\mathtt{10}\): \[d_1d_0 \text{ can be } \left\{ \begin{matrix}\mathtt{10},\\\mathtt{01},\\ \text{or } \mathtt{00} \end{matrix} \right\}\] Put differently, \(d_1\) and \(d_0\) can’t both be \(1\). They can both be \(\mathtt{0}\), or one can be \(\mathtt{1}\), but we can’t have both of them be one: \[d_1d_0 \text{ CAN'T be }\mathtt{11}\] (This is vaguely relevant later.)

So let’s imagine we’re somehow trying to add the number \(d_1d_0\) to \(r\). (\(r\), meanwhile, is one-bit/one digit).

Ignoring how this works in terms of logic gates, and thinking just back

to elementary-school multiplication, we have: \[\begin{array}{r}

d_1d_0 \\

+ r\\

\hline \\

\end{array}\] I’ll list all of the six concrete possibilities for

what this step could look like. (This might or might not be helpful;

ignore if it’s too much information.) \[\begin{array}{r}

\mathtt{10} \\

+ \mathtt{1}\\

\hline\\

\end{array}\hspace{1cm}

\begin{array}{r}

\mathtt{01} \\

+ \mathtt{1}\\

\hline\\

\end{array}\hspace{1cm}

\begin{array}{r}

\mathtt{00} \\

+ \mathtt{1}\\

\hline\\

\end{array}\hspace{1cm}

\begin{array}{r}

\mathtt{10} \\

+ \mathtt{0}\\

\hline\\

\end{array}\hspace{1cm}

\begin{array}{r}

\mathtt{01} \\

+ \mathtt{0}\\

\hline\\

\end{array}\hspace{1cm}

\begin{array}{r}

\mathtt{00} \\

+ \mathtt{0}\\

\hline\\

\end{array}\] Let’s try to do this addition! We had: \[\begin{array}{r}

d_1d_0 \\

+ r\\

\hline \\

\end{array}\] The first step is to add \(d_0\) and \(r\). Those are just two bits/two digits, so

it just takes a single partial magic addition machine. So we have

something like: So we add these, and we get either \(\mathtt{00}\), \(\mathtt{01}\), or \(\mathtt{10}\). (Like in the previous step,

we can’t get \(\mathtt{11}\), because

our inputs are at most both \(\mathtt{1}\).) Let’s call the first digit

of the output \(s_0\). We might or

might not need to carry a \(\mathtt{1}\). Let’s say we do. Actually,

let’s be more general, and call the carry/overflow just \(c\) (which could be \(0\)). So then our diagram looks like:

Here’s what we’ve done

elementary-school-addition-wise: \[\begin{array}{r}

{\color{gray} c} \phantom{{}_2d_1} \\

d_1d_0 \\

+ \quad\quad r\\

\hline

s_0

\end{array}\] If you want to see all six possibilities so far

listed out, we have: \[\begin{array}{r}

{\color{gray} \mathtt{0}} \phantom{\mathtt{0}} \\

\mathtt{10} \\

+ \mathtt{1}\\

\hline

\mathtt{1}

\end{array}\hspace{1cm}

\begin{array}{r}

{\color{gray} \mathtt{1}} \phantom{\mathtt{1}} \\

\mathtt{01} \\

+ \mathtt{1}\\

\hline

\mathtt{0}

\end{array}\hspace{1cm}

\begin{array}{r}

{\color{gray} \mathtt{0}} \phantom{\mathtt{0}} \\

\mathtt{00} \\

+ \mathtt{1}\\

\hline

\mathtt{1}

\end{array}\hspace{1cm}

\begin{array}{r}

{\color{gray} \mathtt{0}} \phantom{\mathtt{0}} \\

\mathtt{10} \\

+ \mathtt{0}\\

\hline

\mathtt{0}

\end{array}\hspace{1cm}

\begin{array}{r}

{\color{gray} \mathtt{0}} \phantom{\mathtt{1}} \\

\mathtt{01} \\

+ \mathtt{0}\\

\hline

\mathtt{1}

\end{array}\hspace{1cm}

\begin{array}{r}

{\color{gray} \mathtt{0}} \phantom{\mathtt{0}} \\

\mathtt{00} \\

+ \mathtt{0}\\

\hline

\mathtt{0}

\end{array}\] Then, for the next step, we need to add \(c\) and \(d_1\): \[\begin{array}{r}

{\color{gray} c} \phantom{{}_2d_1} \\

d_1d_0 \\

+ \quad\quad r\\

\hline

s_0

\end{array}\] But this, too, only requires a partial magic

addition machine! There are only two digits we need to add! \(r\) is just one bit, so we don’t have a

third digit to add! We can do this! If we’re deeper in our

multiplication algorithm, we might need to add three things

together, but in the first step, we only need to add two things

together. So we can use our existing partial magic addition machine

technology! Let’s pop in a THIRD partial magic addition machine!!!

But here’s the fun insight: the carry from this

third partial magic addition machine will always be \(0\)! So we can just ignore it! And the

output of this third partial magic addition machine, along, gives us the

final/second digit of the result!!!

Why must the carry be \(0\)?

- If we’re carrying something, that means the answer from the previous, \(d_0+r\) step, was \(\mathtt{10}\)

- i.e. \(d_0\) and \(r\) were both \(\mathtt{1}\)

- but if \(d_0\) was \(\mathtt{1}\), then \(d_1\) has to be \(\mathtt{0}\). (\(d_1d_0\) can’t be equal to \(\mathtt{11}\); the biggest it can be is \(\mathtt{11}\).)

- so if \(d_1\) is \(\mathtt{0}\), then regardless of what \(c\) is, the biggest \(d_1+c\) can be is \(\mathtt{01}\).

(HW problem: prove this symbolically!)

So then our full computation, in its elementary-school-arithmetic

form, looks like: \[\begin{array}{r}

{\color{gray} c \phantom{{}_2d_1}} \\

d_1d_0 \\

+ \quad\quad r\\

\hline

s_1 s_0

\end{array}\] Actually, I’ll “carry” a zero just to emphasize

that no matter what, we always carry a zero: \[\begin{array}{r}

{\color{gray} \mathtt{0} c \phantom{{}_2d_1}} \\

d_1d_0 \\

+ \quad\quad r\\

\hline

s_1 s_0

\end{array}\] Here are its six concrete instantiations: \[\begin{array}{r}

{\color{gray} \mathtt{0}} \phantom{\mathtt{0}} \\

\mathtt{10} \\

+ \mathtt{1}\\

\hline

\mathtt{11}

\end{array}\hspace{1cm}

\begin{array}{r}

{\color{gray} \mathtt{1}} \phantom{\mathtt{1}} \\

\mathtt{01} \\

+ \mathtt{1}\\

\hline

\mathtt{10}

\end{array}\hspace{1cm}

\begin{array}{r}

{\color{gray} \mathtt{0}} \phantom{\mathtt{0}} \\

\mathtt{00} \\

+ \mathtt{1}\\

\hline

\mathtt{01}

\end{array}\hspace{1cm}

\begin{array}{r}

{\color{gray} \mathtt{0}} \phantom{\mathtt{0}} \\

\mathtt{10} \\

+ \mathtt{0}\\

\hline

\mathtt{10}

\end{array}\hspace{1cm}

\begin{array}{r}

{\color{gray} \mathtt{0}} \phantom{\mathtt{1}} \\

\mathtt{01} \\

+ \mathtt{0}\\

\hline

\mathtt{01}

\end{array}\hspace{1cm}

\begin{array}{r}

{\color{gray} \mathtt{0}} \phantom{\mathtt{0}} \\

\mathtt{00} \\

+ \mathtt{0}\\

\hline

\mathtt{00}

\end{array}\] And our circuit diagram looks like: Actually, we can strip this down further. A

partial magic addition machine is really just two logical functions,

\(\oplus\) for the first bit/digit and

\(\land\) for the second:

But we don’t care about the second

digit/bit/carry, so we can just delete that, and just think of this as a

final \(\oplus\):

So that’s our full magic addition machine!!!!

an excuse to verify

From PS#9:

In class I showed how I built the full adder by wiring together three half-adders, and then we simplified the last half-adder to just an XOR gate (XOR’ing the carries from the two half-adders). Brendan said that he had just used an OR gate for that purpose; Luca pointed out that it’ll be equivalent, because even though XOR and OR are different functions, they’re only different when their inputs are both true/both \(1\), and that will never happen (the carries will never both be \(1\)).

Can you prove this symbolically??? I don’t mean “argue linguistically why the carries can never both be \(1\)” (as we did in class, and as I do in the notes); I mean, using the formulas for these two carries, prove, symbolically, that they can never both be \(1\)!!! You could do a Quine-style analysis, you could do a truth table… just do it symbolically, with no reference to the external meaning of what we’re trying to do in building this adder!

adding two big numbers together!

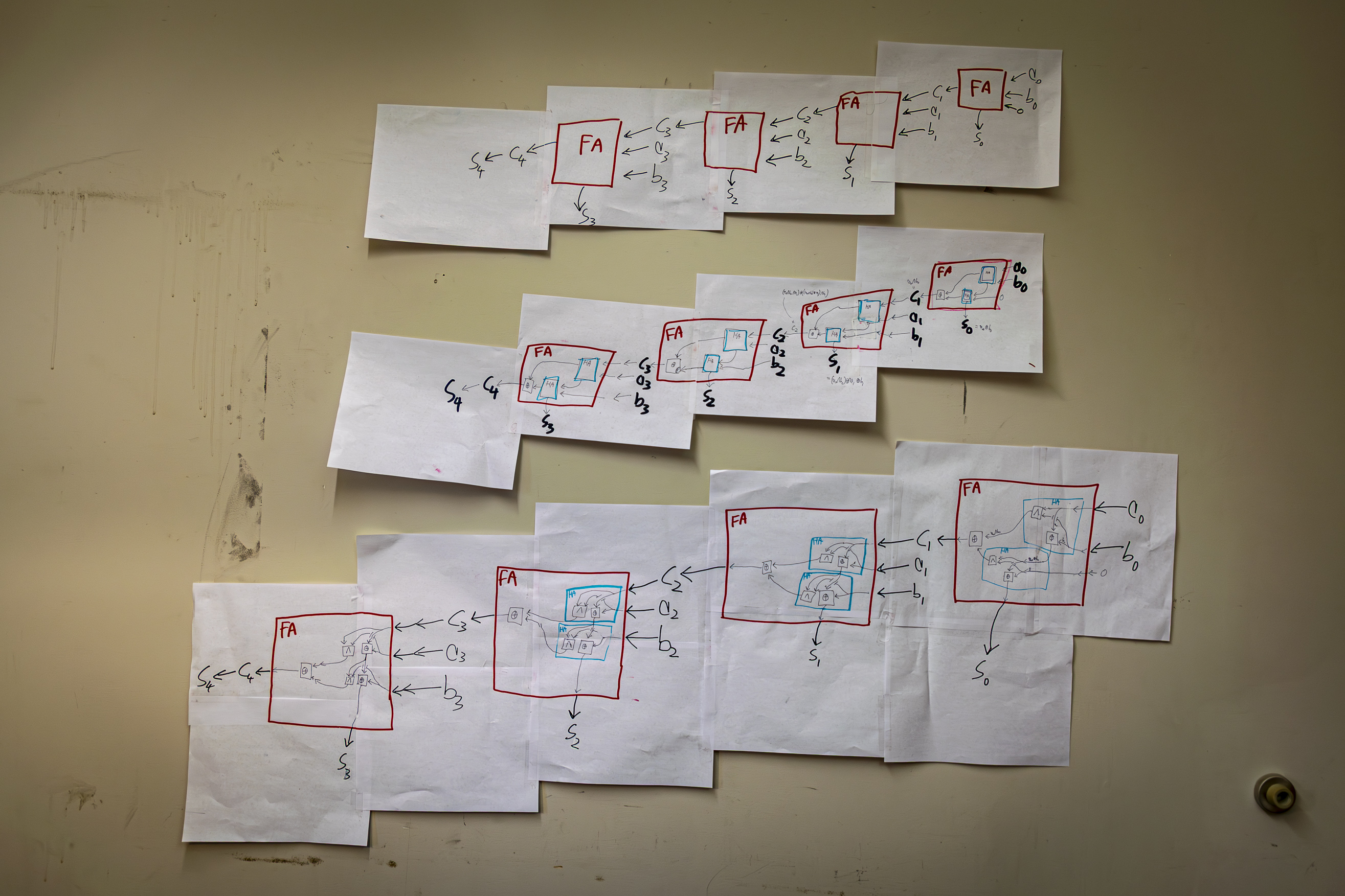

From PS#9:As the main followup to building a full adder: suppose you want to add together the numbers \(a_3a_2 a_1 a_0\) (where each of the \(a_i\) is one of the digits; we’re concatenating them not multiplying them) and \(b_3 b_2 b_1 b_0\). Can you do it using a bunch of full adders?!?! \[\begin{array}{r} a_3a_2a_1a_0 \\ + \quad b_3b_2b_1b_0 \\ \hline\\ \end{array}\] (Note I start the indexing/subscripts at \(0\), to match the corresponding powers of \(2\).) A few specific sub-problems. First, can you come up with formulas for each of the resulting digits? As we sort of saw, they get more and more complicated the further out you get. In other words, if the addition is: \[\begin{array}{r} a_3a_2 a_1 a_0 \\ + \quad b_3 b_2 b_1 b_0 \\ \hline s_4s_3s_2s_1s_0 \end{array}\] Can you come up with formulas for \(s_0\), \(s_1\), \(s_2\), and so forth? Here, I’ll even be generous and give you the first answer: \(s_0 = a_0 \oplus b_0\)! You’re welcome.

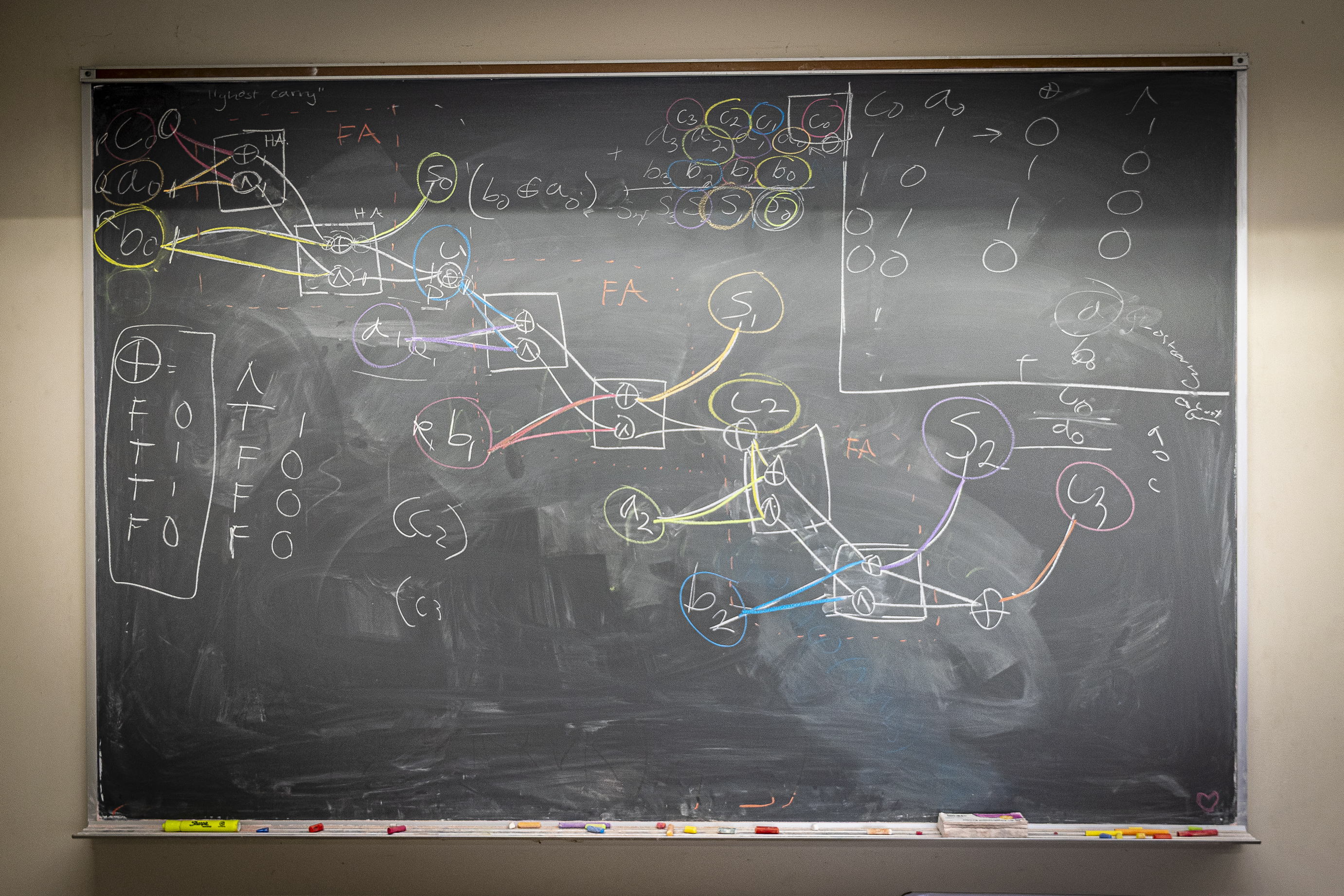

Second, can you actually draw out this network of adders? I am envisioning something like what Brendan (bless him) drew on the board at the end of class, but more clear. Brendan’s diagram was beautiful but its disadvantage was that it didn’t make it clear (at least to me) what the original numbers he was adding were. But I think this fixes that! What I want you to do is draw out the full network, down to just the AND and XOR gates (“gates” is the name people in electronics usually give to logical functions), but also showing the half-adders and the full-adders as well. (For notational consistency, and so it’s easier for us to cross-check, perhaps outline all the half-adders in blue, and the full adders in red?) You could start by writing out a big network of full adders and then expanding; you could try bottom-up and start with just AND and XOR gates (which it seems is what Brendan did?)

(If any of you in electronics have the components to build simple logic gates, feel free to do so as well!)

Here’s what I showed us in class (and which is now tacked up on the wall of the visiting faculty office): my attempt to sketch out this circuit, in three different levels of granularity. The top schematic is just a system of four full adders/full magic addition machines strung together; the middle is the same thing, but with each of those full adders x-rayed into its constituent two half-adders and XOR; finally, the bottom is the full expansion into just XOR and AND gates:

Perhaps, to continue this whimsical naming scheme, we should call this a magic addition monster. (But most people would call this a multi-bit adder, or perhaps a four-bit adder).

With infinite space and patience, I’d sketch out the formulas for each output and each input. But, lacking that, I just came up with the formulas for the final digits. To do so, I first wrote out the formulas for what’ll come out of the \(n\)th adder in a sequence. So I’m imagining a full adder with inputs \(a_n\) and \(b_n\), as well as an incoming carry \(c_n\), and outputs \(s_n\) (the digit we put down) and \(c_{n+1}\) (the next carry). By tracking the internal details of what happens (not shown), we get these formulas:

So then we can recursively figure out the formulas for each of the digits.

Coming out of the first full adder, we have the first digit we put down \(s_0\), and the first carry/overflow digit, \(c_1\): \[\begin{align*} s_0 &= a_0 \oplus b_0 \\ c_1 &= a_0 \land b_0 \end{align*}\]

Then, the output of the second full adder is: \[\begin{align*} s_1 &= ( a_1 \oplus b_1) \oplus c_1 \\ &= ( a_1 \oplus b_1) \oplus ( a_0 \land b_0) \\ \\ c_2 &= ( a_1 \land b_1 ) \oplus \bigl( ( a_1 \oplus b_1) \land c_1 \bigr) \\ &= ( a_1 \land b_1 ) \oplus \bigl( ( a_1 \oplus b_1) \land ( a_0 \land b_0) \bigr) \end{align*}\]

Plugging that into the next full adder, we get, as the output of our third full adder: the output of the third full adder: \[\begin{align*} s_2 &= ( a_2 \oplus b_2) \oplus c_2 \\ &= ( a_1 \oplus b_1) \oplus \bigl( ( a_1 \land b_1 ) \land ( a_1 \oplus b_1) \land ( a_0 \land b_0 ) \bigr) \\ \\ c_3 &= ( a_2 \land b_2 ) \oplus \bigl( ( a_2 \oplus b_2) \land c_2 \bigr) \\ &= ( a_2 \land b_2 ) \oplus \Bigl( ( a_2 \oplus b_2) \land \bigl( ( a_1 \land b_1 ) \oplus \bigl( ( a_1 \oplus b_1) \land ( a_0 \land b_0) \bigr) \bigr) \Bigr) \\ \end{align*}\]

Finally, we plug things into the last full adder, and get: \[\begin{align*} s_3 &= ( a_3 \oplus b_3 ) \oplus c_3\\ &= ( a_3 \oplus b_3 ) \oplus ( a_2 \land b_2 ) \oplus \Bigl( ( a_2 \oplus b_2) \land \bigl( ( a_1 \land b_1 ) \oplus \bigl( ( a_1 \oplus b_1) \land ( a_0 \land b_0) \bigr) \bigr) \Bigr) \\ \\ c_4 = s_4 &= ( a_3 \land b_3) \oplus \bigl( ( a_3 \oplus b_3 ) \land c_3 \bigr) \\ &= ( a_3 \land b_3) \oplus \Biggl( ( a_3 \oplus b_3 ) \land ( a_2 \land b_2 ) \oplus \Bigl( ( a_2 \oplus b_2) \land \bigl( ( a_1 \land b_1 ) \oplus \bigl( ( a_1 \oplus b_1) \land ( a_0 \land b_0) \bigr) \bigr) \Bigr) \Biggr) \\ \end{align*}\]

Monster indeed! What a fright! Note how, even though we need all these carry digits for the intermediate steps: \[\begin{array}{r} {\color{gray} c_4c_3c_2c_1\,\phantom{a_0}} \\ a_3a_2a_1a_0 \\ +\quad b_3b_2b_1b_0 \\ \hline s_4s_3s_2s_1s_0 \end{array}\] they’re all gone from our final answer! This makes sense—our final answer should only be a function of the \(a_i\) and \(b_i\) digits—but it’s still cool to see.

adding four numbers together!

From PS#10:

Brendan calls up your adding-machine company. He demands a magic addition machine that takes in four one-digit numbers and outputs their sum. However, you only have the usual addition machines in stock: the partial magic addition machine (which takes in two one-digit numbers) and the full magic addition machine (which takes in three). Can you still build him what he wants? Do you have to go all the way back to the drawing board? In a sense this is a repeat of problem #4 on PS#7… but now we’re better equipped!

Brendan and Tahm had this question after class. I realized, after I worked it out, that it was the perfect followup question. It’s not too hard, but nor is it totally trivial; building this device requires the same thought processes that we needed to build a full adder (modularity! splitting up big tasks into smaller tasks!) without repeating them verbatim.

Here’s the computation we want to do: \[\begin{array}{r} p \\ q \\ r \\ +\,\,\quad s\\ \hline \\ \end{array}\] We have four one-digit/one-bit numbers, so they’re all either \(\mathtt{1}\) or \(\mathtt{0}\) (either in binary or in decimal!), so when we add them up, their sum must be between \(\mathtt{0}\) and \(\mathtt{100}\) (i.e., in decimal between \(0\) and \(4\)). So that means we’ll need three one-digit/one-bit values to store the sum. So the computation, at the end, will look like: \[\begin{array}{r} p \\ q \\ r \\ +\quad s\\ \hline s_2s_1s_0 \end{array}\] Maybe it’s bad to re-use the letter \(s\); I mean no connection between the \(s\) that’s one of the numbers we’re adding up and the \(s_i\) that are the digits of the sum. Oh well!

But how do we do this computation? We’ve figured out how to add

together two four-digit numbers—but here we want to add together four

one-digit numbers. That’s different, because all of these numbers/digits

have the same place value. So we can’t just wire together the same

multi-bit adder magic addition monster we built to add \(a_3a_2a_1a_0\) and \(b_3b_2b_1b_0\). But we can use some of the

same principles!

What do we have to work with? We have our full adder full

magic addition machine, which adds together three one-digit numbers. So

let’s use a full magic addition machine to add three of our four

numbers, take the result, and then add that together with the fourth

number.

OK, let’s start. Let’s add together three of the four numbers. We’ll

add \(p\), \(q\), and \(r\), like so: \[\begin{array}{r}

p \\

q \\

+\quad r \\

\hline \\

\end{array}\] We have three digits/numbers to add, so we can do

this using a full adder/a full magic addition machine! We’ll get some

resulting sum in the \(2^0\) place,

which we’ll call \(d_0\), and then

carry over some overflow \(d_1\) into

the \(2^1\) place. Our computation will

look like: \[\begin{array}{r}

{\color{gray} d_1}\phantom{p} \\

p \\

q \\

+\quad r \\

\hline

d_1d_0

\end{array}\] Schematically, this will look like: Now we need to

somehow add \(s\) to this. We have

three digits, \(d_1\), \(d_0\), and \(s\), but we can’t just add them all

together with another full adder—they have different place values! \(d_0\) and \(s\) are both in the \(2^0\) place, but \(d_1\) is in the \(2^1\) place. In other words, the numbers we

need to add right now are: \[\begin{array}{r}

d_1d_0 \\

+\quad s \\

\hline \\

\end{array}\] So really, we just want to add \(s\) and \(d_0\) together… and then figure out what to

do with \(d_1\). So first we just want

to do this computation: \[\begin{array}{r}

d_0 \\

+\quad s \\

\hline \\

\end{array}\] We might have a carry from this? Let’s call the

carry \(e_1\) (we’ve already used the

letter \(d\) for the carries from the

first adder). Also, whatever value we get from adding \(d_0\) and \(s\) will be our final answer for the \(2^0\) digit for the full sum! We just have

to propogate the carries through. (We ripple them

through—that’s the verb that gets used a lot.) So \(s_0\) will be the sum digit in the \(2^0\) place: \[\begin{array}{r}

{\color{gray} e_1} \phantom{d_0} \\

d_0 \\

+\quad s \\

\hline

s_0

\end{array}\] OK, so we can do all this with a half-adder/partial

magic addition machine! If we add that to our schematic, we have:

Yay! So we still

have these two seperate carries to deal with, \(d_1\) and \(e_1\). But that’s just another computation

we can do with a half-adder/partial magic addition machine! We’ll have

two inputs, and then we’ll get two outputs—the first will become \(s_1\), and the second/the carry will become

\(s_2\)! So we have something that

looks like:

If

you want the formulas for the outputs, plus the intermediate steps, they

work out to be:

keevan’s four-digit adder

The four-digit adder I built above is the same as what Sasha and a few others (sorry for forgetting whom!) built. Keevan, however, built something different:

(Note that I’ve drawn these adders with the same visual symbolism as the rest of these diagrams—with inputs coming from the right, the lowest-place-value digit coming out the bottom, and the carry/next-higher-place-value-digit coming out the left.)

Keevan’s circuit seems plausible! But can you prove that it gives the same results as my/Sasha’s four-digit adder? In other words, can you (a) come up with the formulas for each of the three output digits, and then (b) prove that these formulas are equivalent to the corresponding formulas for the four-digit adder that Sasha and I built? By “prove” I mean in a purely logical and symbolic way, without using any of your understanding of how multiplication or addition works, and only using your knowledge of how AND and XOR work.

multiplication, revisited

Brian Hill, DS’s long-term natural sciences professor, who’s also teaching an Analog Electronics class this semester, wandered into my office near the end of term. We talked about logic gates, the complicated-more-mechanical-no-longer-taught algorithms he learned for long division as a kid, and his attempt at one point to work through, for fun, the schematics and technical manual for the HP-35 (the first commercially successful scientific calculator). Inspired by the conversation I realized the perfect midterm exam would be to have the kids wire up a multiplier. But first I had to make sure that the kids remembered to do the elementary-school multiplication algorithm. So, from PS#12:

Multiplication!!! It’s so fun. A proper pasttime for promising young things. Multiply the following numbers together, algorithmically, by hand, using the classic elementary-school algorithm we reminded ourselves of today in class (the left two are in decimal; the right two are in binary): \[\begin{array}{r} 47 \\ \times \quad 98 \\ \hline\\ \end{array} \hspace{1cm} \begin{array}{r} 345{,}648 \\ \times \quad 92{,}905 \\ \hline\\ \end{array} \hspace{1cm} \begin{array}{r} \mathtt{11010} \\ \times \quad \mathtt{101} \\ \hline\\ \end{array} \hspace{1cm} \begin{array}{r} \mathtt{10011} \\ \times \quad \mathtt{1101} \\ \hline\\ \end{array}\] Check them afterwards—both for reasonability, and because arithmetic is hard—and with the binary ones, only convert them to decimal after you’ve done the full computation. (Do it natively in binary! Become a BEING OF BINARY!!!)

To my embarrassment I had to watch a video reminding me how to do the elementary-school long multiplication algorithm.

why all the XOR’ing?

From PS#12:

In all of the various adders we’ve built—the half-adder, the full adder, the giant four-digit adder, the weird four-one-digit adder—the smallest digit has always had a very simple formula. It’s always just been some things XOR’d together. With the half adder that digit was \(p\oplus q\); with the full adder it was \(p\oplus q \oplus r\); with the weird four-digit adder it was \(p \oplus q \oplus r \oplus s\). Why? With all the successive digits, the formulas get more and more complicated. But with this initial digit—even if we’re adding really big numbers, or a lot of numbers—it’s always so simple. Why?

magic multipliers

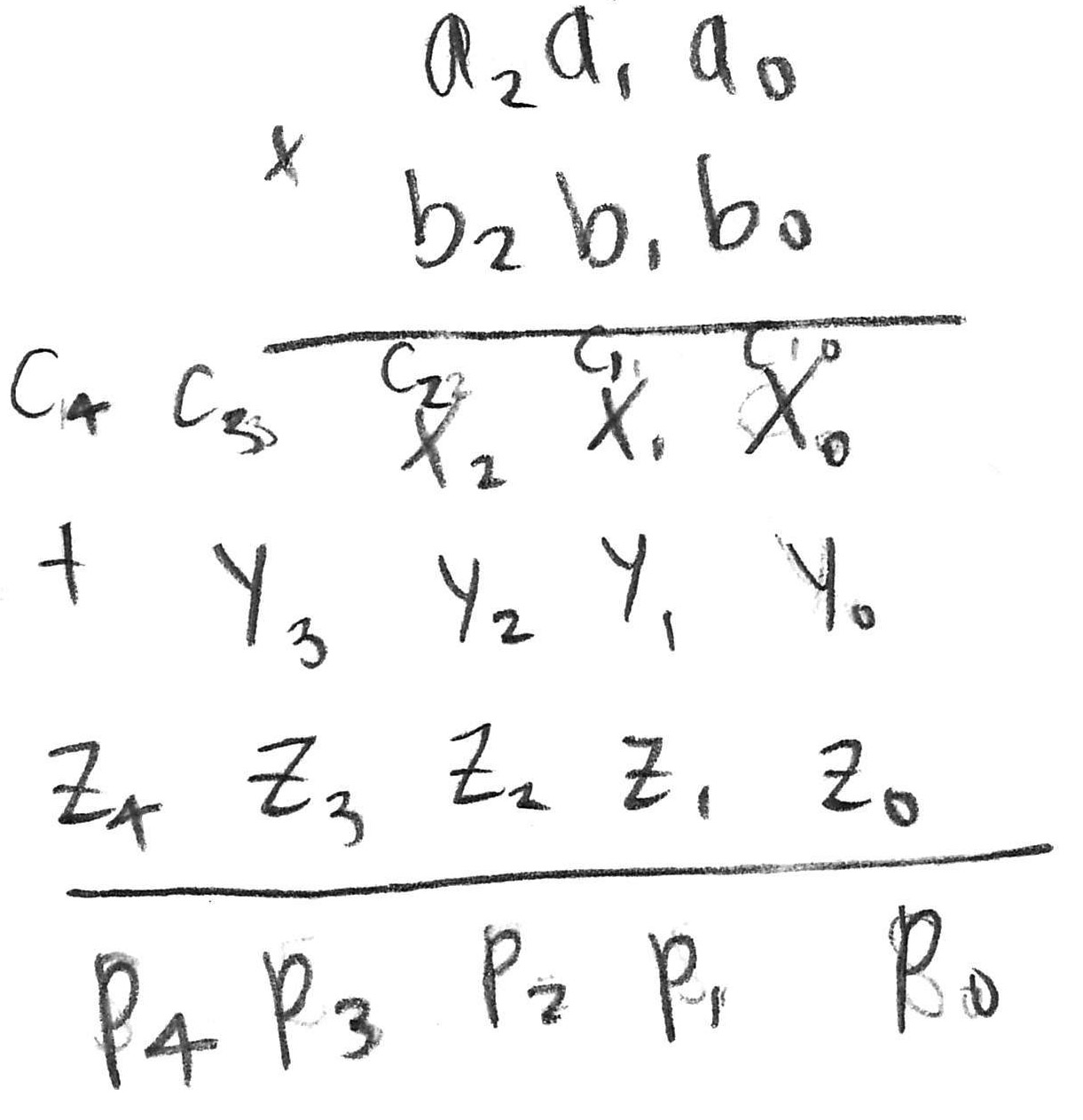

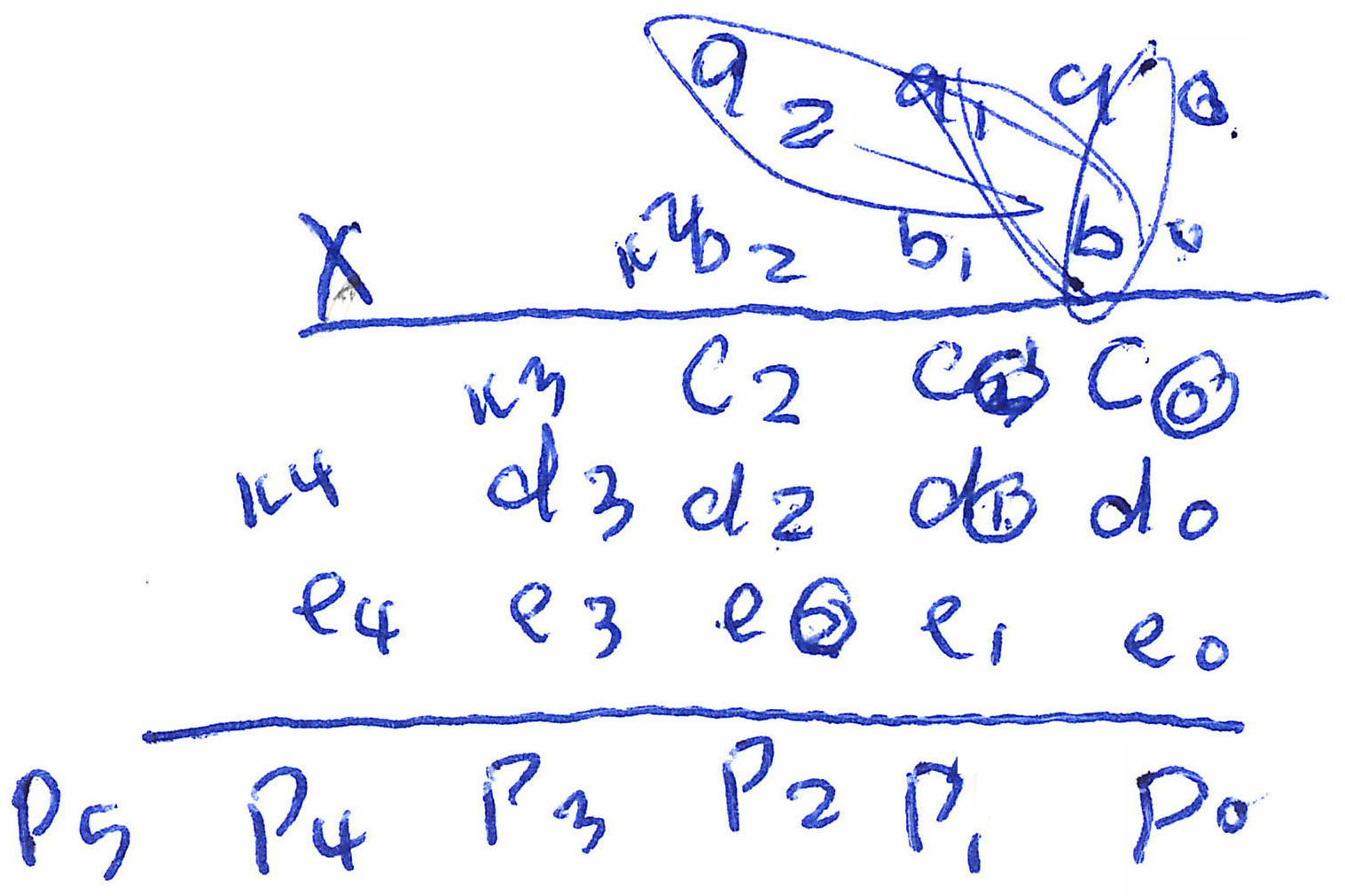

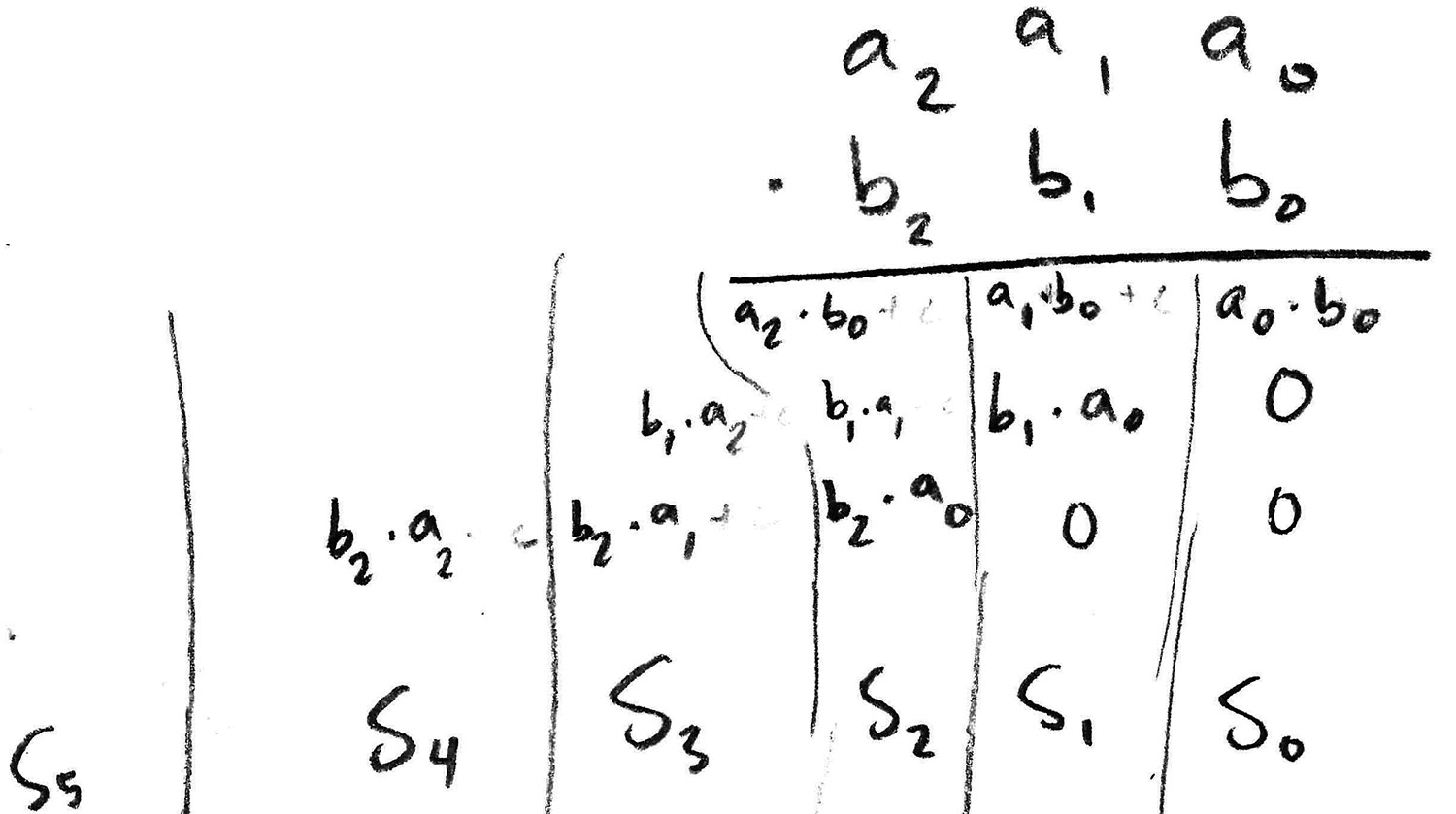

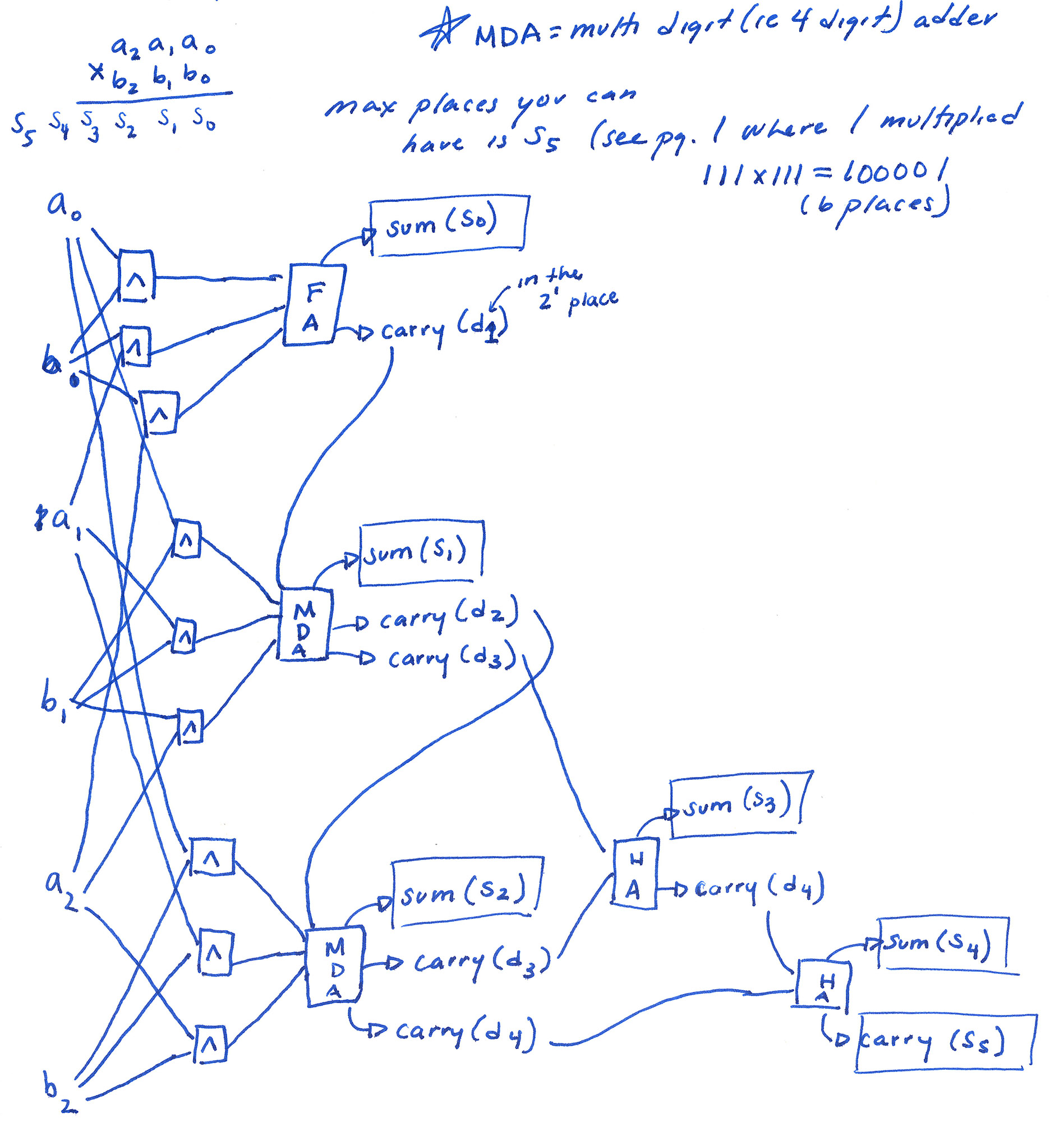

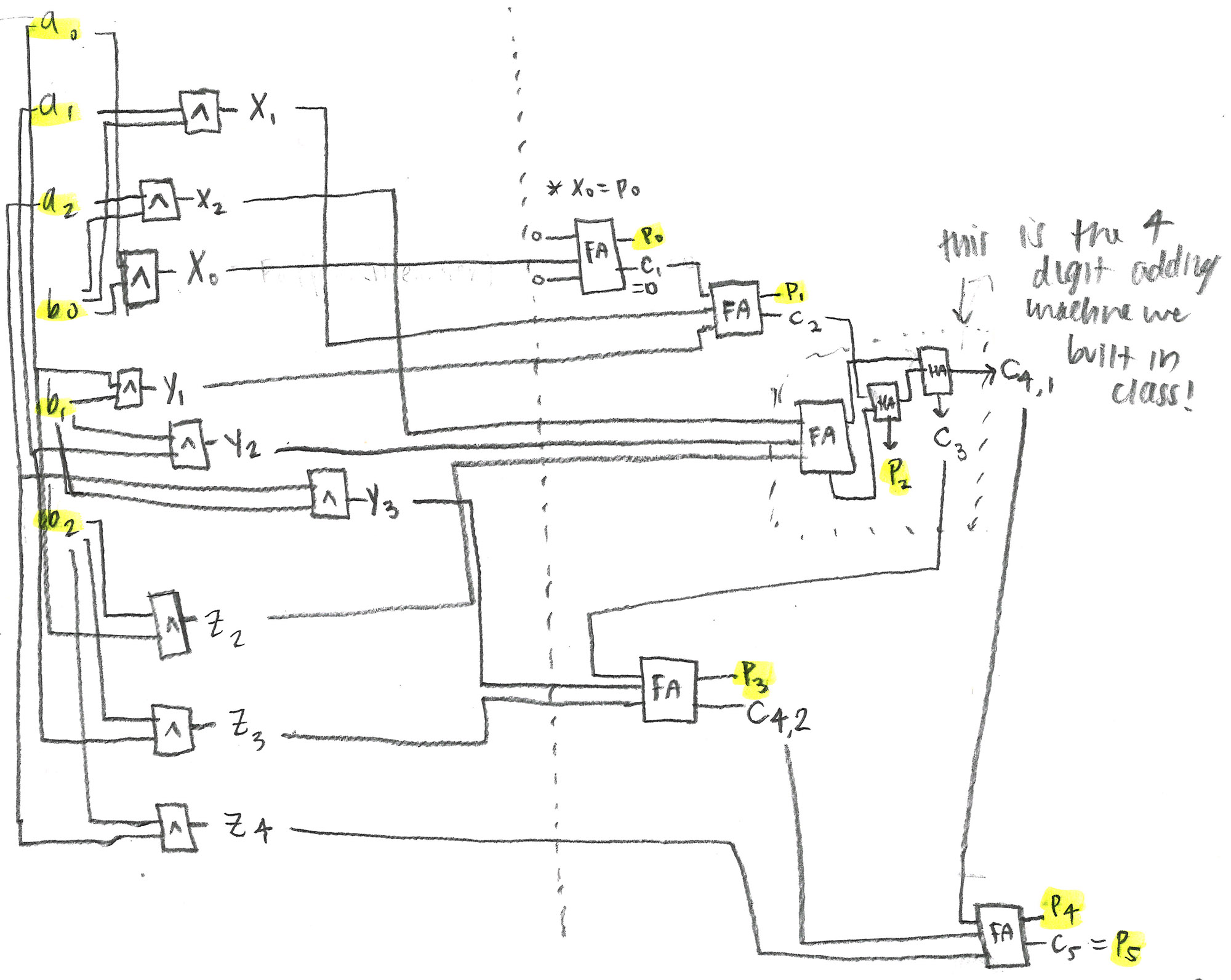

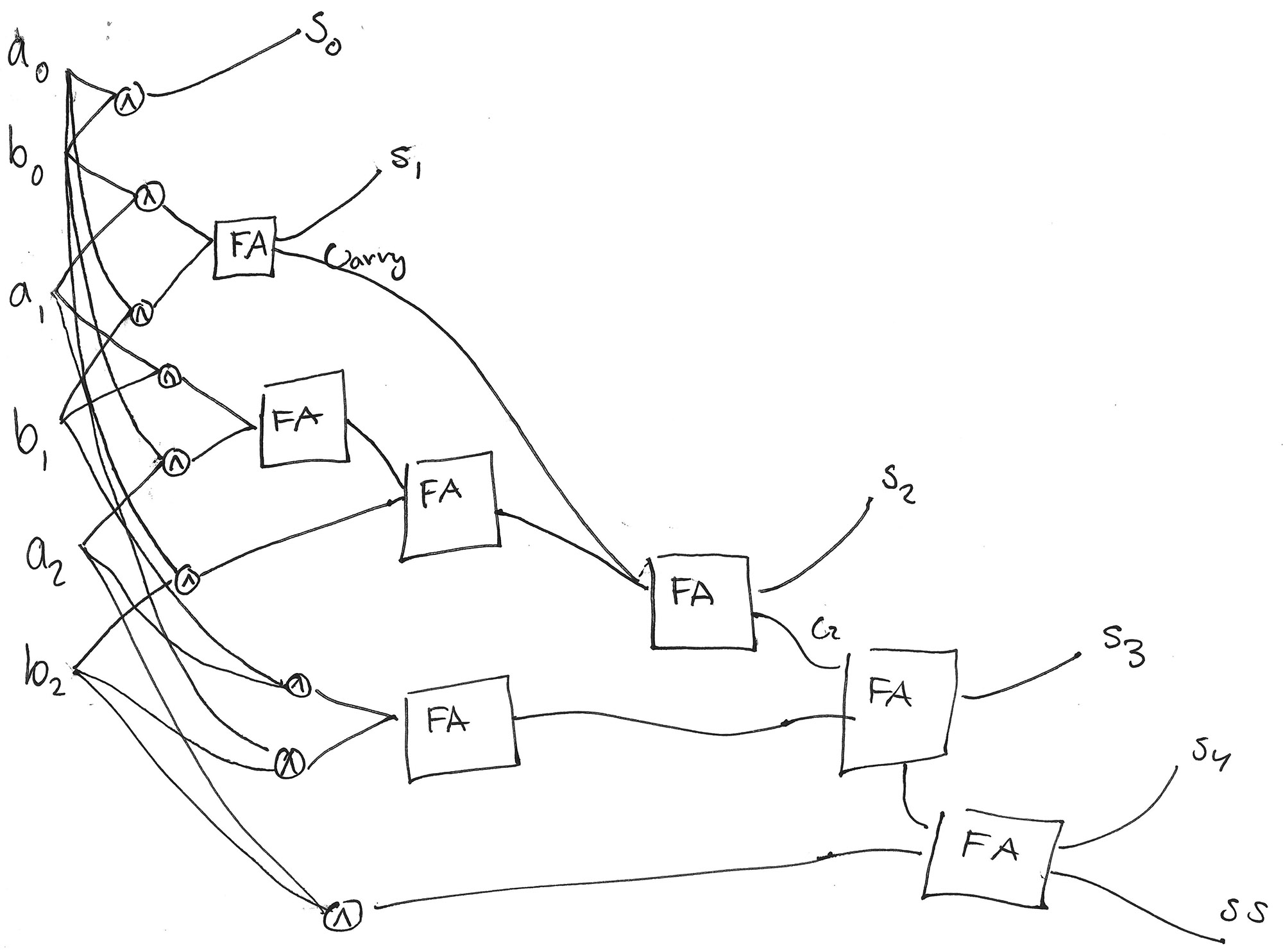

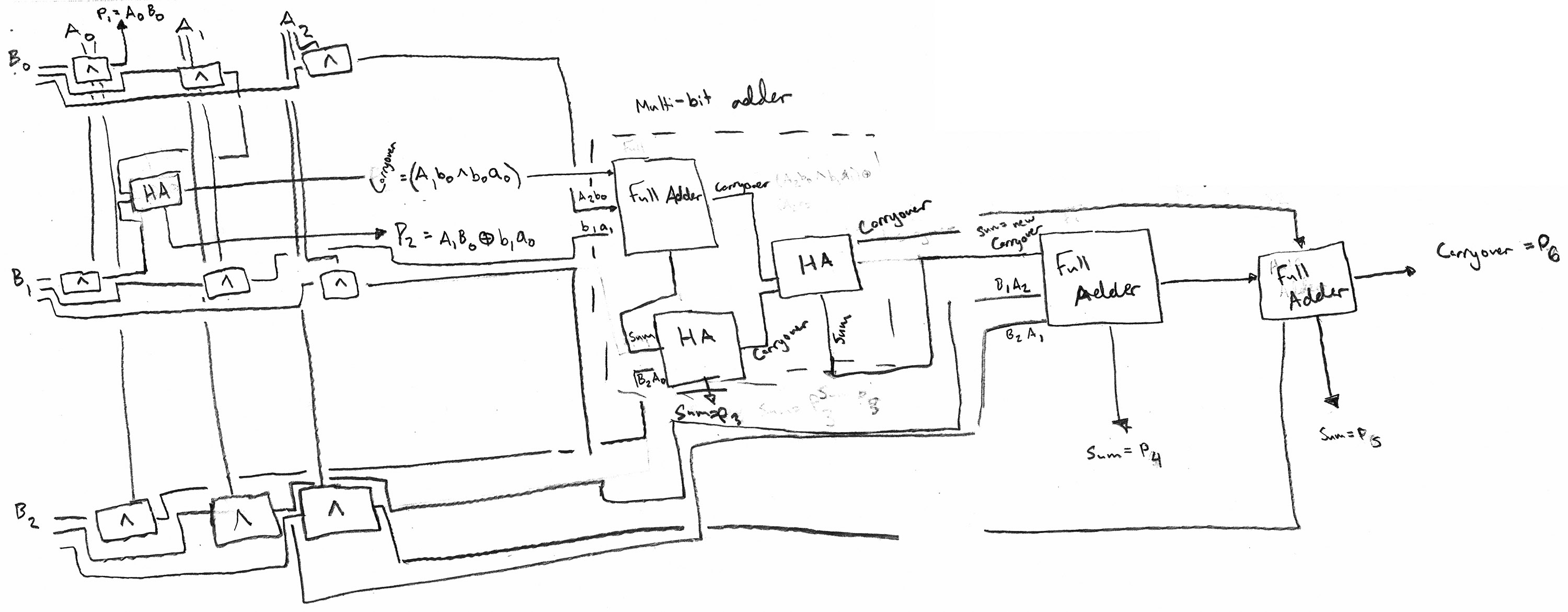

The sole problem on our midterm, Friday, February 27th:Can we use logic to multiply numbers together?!? In particular, suppose we have two three-digit binary numbers, and we multiply them together: \[\begin{array}{r} a_2a_1a_0 \\ \times \quad b_2b_1b_0 \\ \hline \\ \end{array}\] Can you build a magic multiplication machine that will take as input these two binary numbers (so six binary inputs in total) and return the resulting digits of the product??? Feel free to re-use any of the magic arithmetic machines we’ve built so far. But do show as much detail as possible, and explain your thought process as clearly and thoroughly as you can (using words, sentences, pictures, diagrams, equations, truth tables… your full intellectual arsenal!)

Feel free to use you notes; don’t otherwise look stuff up; don’t work with each other! I’m excited to see how far you get on this problem. Even if you don’t figure it out, I hope it gives you something to chew on over break!

I was exceedingly pleased with how this went. I thought a few students would figure it out and a few wouldn’t, but basically everyone built a working multiplier—many spread across multiple 8.5x11 pages taped together.

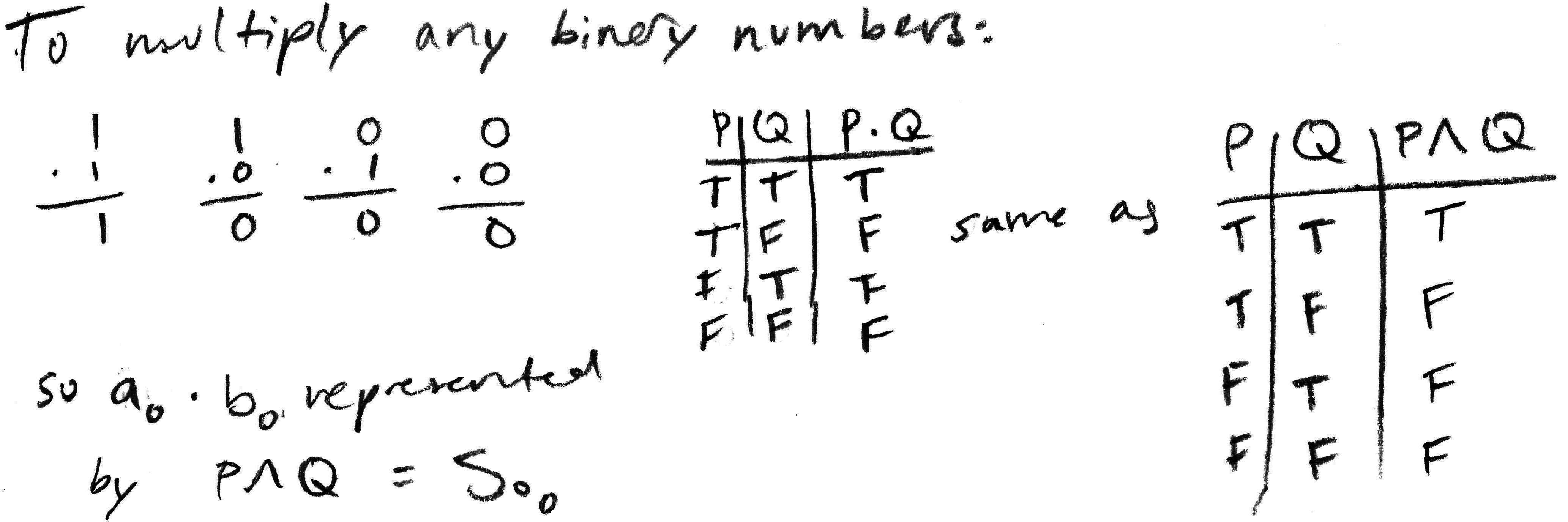

Single-digit multiplication in binary is easy: one times one is one; zero times anything is zero! No carries! It works out to be just logical AND:

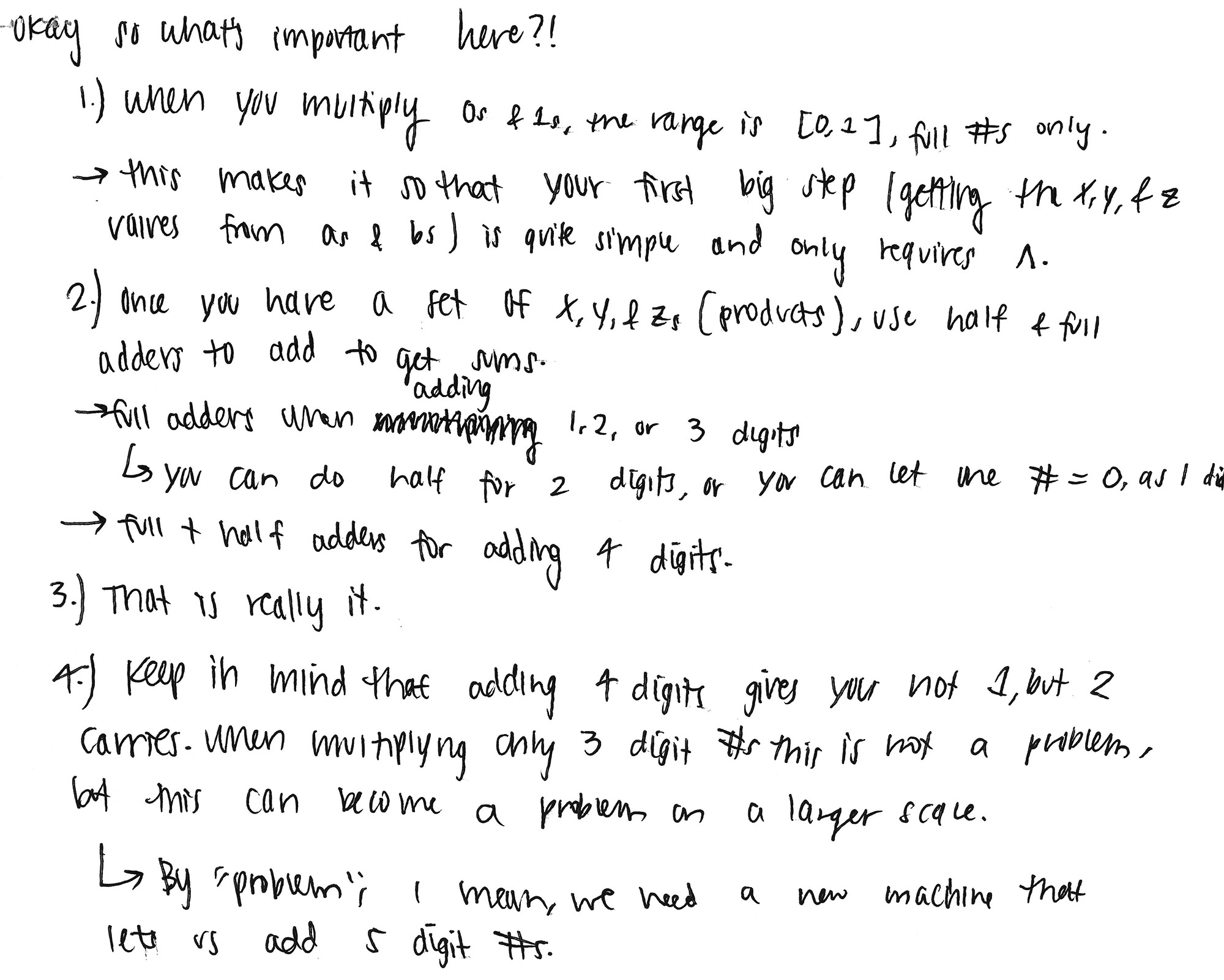

When we multiply multi-digit/multi-bit numbers, we get a bunch of intermediate things to add, looking something like:

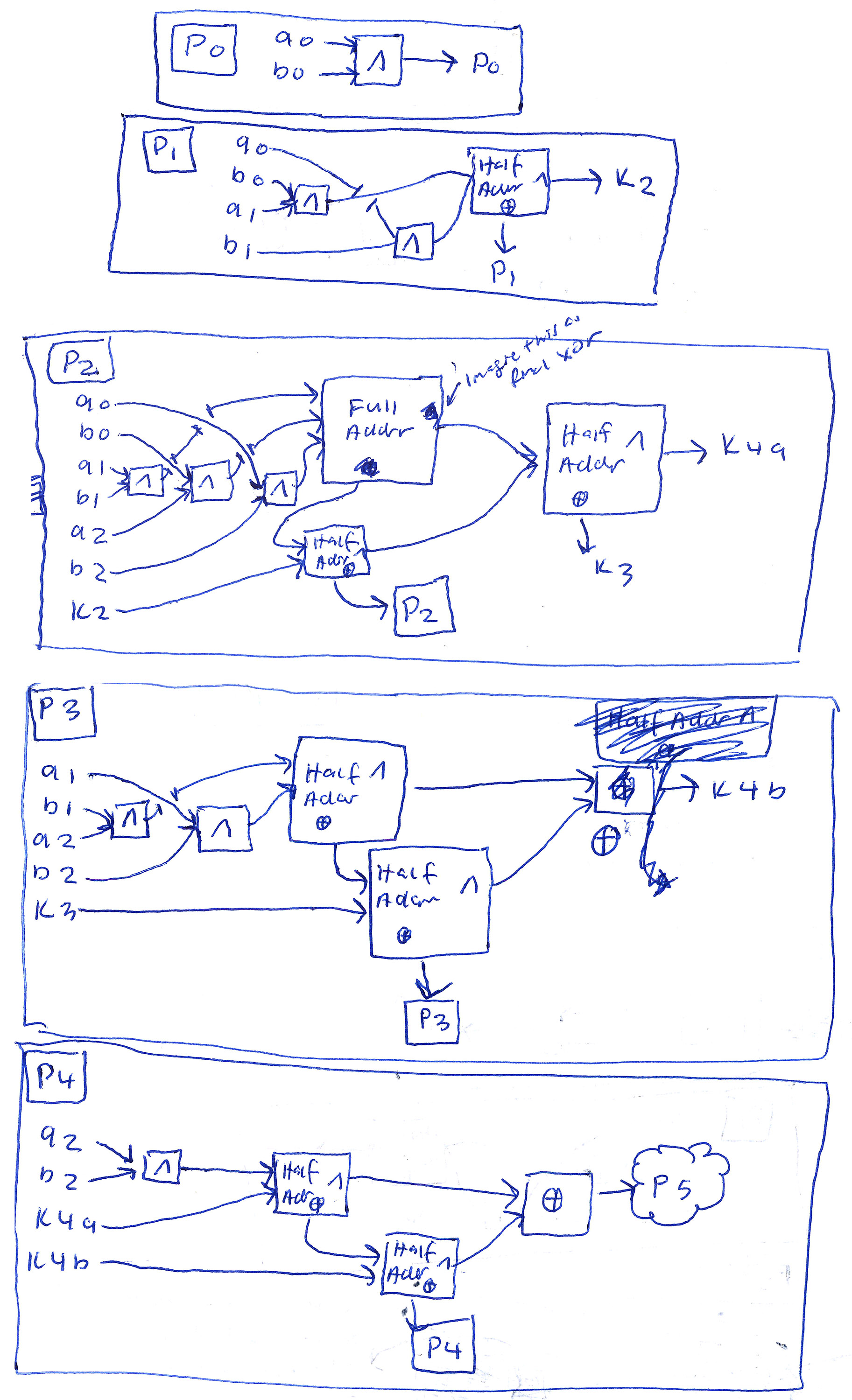

So the full algorithm for multiplying together multi-bit numbers consists of just adding together bit-shifted versions of one of the original multiplicands. As one of the kids put it on the last page:

Here are some of the actual multiplication circuits the kids came up with. (I’m not showing all of their scratch work or drafts, or the effort to check and prove them that many of them put it!)

Here’s an example in which the functions for the five output digits are split up: